Dot #1: Input. In order to operate any sort of computer, you need to provide it with the information floating around in your brain.

Dot #2: Display. In order to process the information that you’re pouring into the computer, you need to see, hear, or otherwise sense your work-in-progress.

Dot #3: Storage. Whatever you input and display, you need to be able to keep it and change it. Also, it would be best if there were a second copy, preferably somewhere safe.

Dot #4: Connection and Sharing. Seems as though every 21st century device needs to be able to send, receive, and share information, often in a collaborative way.

Dot #5: Output. In some ways, this concept is losing relevance. Once displayed, stored and shared, the need to generate anything beyond a screen image is beginning to seem very twentieth century. But it’s still around and it needs to be part of the package.

Dot #6: Portable. Truly portable devices must be sufficiently small and lightweight, serve the other needs in dots 1-5, and also carry or collect their own power, preferably sufficient for a full day’s (or a full week’s) use between refueling stops.

Let’s take these ideas one at a time and see where the path leads.

Dot #1: Input. Basically, the “man-machine” interface can be achieved in about five different ways. Mostly, these days, we use our hands, and in particular, our fingertips. To date, this has served us well both on keyboards (which require special skill and practice, but seem to keep pace with the speed of thinking in detail), and on touch screens (which are not yet perfect, but tend to be surprisingly good if the screen is large enough). ThinkGeek sells a tiny Bluetooth projector that displays a working keyboard on any surface.

There are the often underrated Wacom tablets, which use a digital pen, but this, like a trackpad, requires abstract thinking: draw here, and the image appears there. It’s better, more efficient, and ultimately, probably more precise, to use a stylus directly on the display surface. So far, touch screens are the best we can do. Insofar as portable computing goes, this is probably a good thing because the combination of input (Dot #1) and display (Dot #2) reduces weight and allows the user direct interaction with the work.

This combination is becoming popular not only on tablets (and phones), but on newer touch-screen laptops, such as the HP Envy x2 (visit Staples to try similar models). The combination is useful on a computer, but more successfully deployed on a tablet because the tablet can be more easily manipulated–brought closer to the eyes, handled at convenient angles, and so on.

Moving from the fingers to other body parts, speaking with a computer has always seemed like a good idea. In practice, Dragon’s voice recognition works, as does Siri, both based upon language pattern recognition developed by Ray Kurzweil. So far, there are limitations, and most are made more challenging by the needs of a mobile user: a not-quiet environment, the need for a reliable microphone and digital processing with superior sensitivity and selectivity, and artificial intelligence superior to the auto-correct feature on mobile systems. There is lots to consider, which makes me think voice will be a secondary approach.

Eyes are more promising. Some digital cameras read movement in the eye (eye tracking), but it’s difficult to input words or images this way, and the science has a ways to go. The intersection between Google Glass and eye movement is also promising, but early stage. Better still would be some form of direct brain output: thinking generates electrical impulses, but we’re not yet ready to transmit or decode those impulses into messages suitable for input into a digital device. This is coming, but probably not for a decade or two. Also, keep an eye on the glass industry, where innovation will lead us to devices that are flexible, lightweight, and surprising in other ways.

So: the best solution, although still improving, is probably the combination tablet design with a touch-screen display, supported, as needed on an individual basis, by some sort of keyboard, mouse, stylus, or other device for convenience or precision.

(BTW: Wikipedia’s survey of input systems is excellent.)

As for display, projection is an interesting idea, but lumens (brightness) and the need for a proper surface are limiting factors. I have more confidence in a screen whose size can be adjusted. (If you’re still thinking in terms of an inflexible, rigid glass rectangle, you might reconsider and instead think about something thinner, perhaps foldable or rollable, if that’s a word.)

Dot #3: Storage has already been transformed. For local storage, we’re moving away from spinning disks (however tiny) and into solid state storage. This is the secret behind the small size of the Apple MacBook Air, and all tablets. These devices demand less power, and they respond very, very quickly to every command. They are not easily swapped out for larger storage devices, but they can be easily enhanced with SD cards (size, speed, and storage capacity vary). Internal “SSD” (Solid State Drive) storage will continue to increase in size and decrease in cost, so this path seems likely to be the one we follow for the foreseeable future. Add cloud storage, which is inexpensive, mostly reliable (we think), and mostly private and secure (we think), and the opportunity for low-cost storage for portable devices becomes that much richer. Of course, the latter requires a connection to Dot #4: Connection and Sharing. Connecting these two dots is the core of Google’s Chrome strategy.

![(Copyright 2006 by Zelphics [Apple Bushel])](https://diginsider.com/wp-content/uploads/2013/03/apple_bushel.png?w=660)

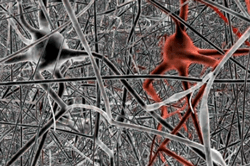

Much of Kurzweil’s theory grows from his advanced understanding of pattern recognition, the ways we construct digital processing systems, and the (often similar) ways that the neocortex seems to work (nobody is certain how the brain works, but we are gaining a lot of understanding as a result of various biological and neurological mapping projects). A common grid structure seems to be shared by the digital and human brains. A tremendous number of pathways turn on or off, at very fast speeds, in order to enable processing, or thought. There is tremendous redundancy, as evidenced by patients who, after brain damage, are able to relearn but who place the new thinking in different (non-damaged) parts of the neocortex.
