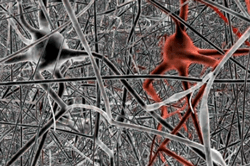

Diffusion spectrum image shows brain wiring in a healthy human adult. The thread-like structures are nerve bundles, each containing hundreds of thousands of nerve fibers.

Source: Van J. Wedeen, M.D., MGH/Harvard U. To learn more about the government’s new connectome project, click on the brain.

You may recall recent coverage of a major White House initiative: mapping the brain. In that statement, there is ambiguity. Do we mean the brain as a body part, or do we mean the brain as the place where the mind resides? Mapping the genome–the sequence of the four types of molecules (nucleotides) that compose your DNA–is so far along that it will soon be possible, for a very reasonable price, to purchase your personal genome pattern.

A connectome, in the words of the brilliantly clear writer and MIT scientist Sebastian Seung, is “the totality of connections between the neurons in [your] nervous system.” Of course, “unlike your genome, which is fixed from the moment of conception, your connectome changes throughout your life. Neurons adjust…their connections (to one another) by strengthening or weakening them. Neurons reconnect by creating and eliminating synapses, and they rewire by growing and retracting branches. Finally, entirely new neurons are created and existing ones are eliminated, through regeneration.”

In other words, the key to who we are is not located in the genome, but instead, in the connections between our brain cells–and those connections are changing all the time. The brain, and, by extension, the mind, is dynamic, constantly evolving based upon both personal need and stimuli.

With his new book, the author proposes a new field of science for the study of the connectome: how the brain behaves, and how we might change that behavior. It isn’t every day that I read a book in which the author proposes a new field of scientific endeavor, and, to be honest, it isn’t every day that I read a book about anything that draws me back into reading even when my eyes (and mind) are too tired to continue. “Connectome” is one of those books that is so provocative, so inherently interesting, so well-written, that I’ve now recommended it to a great many people (and now, to you as well).

Seung is at his best when exploring the space between brain and mind, the overlap between how the brain works and how thinking is made possible. For example, he describes how the brain recognizes Jennifer Aniston, a job that is done not by one neuron, but by a group of them, each recognizing a specific aspect of what makes Jennifer Jennifer. Blue eyes. Blonde hair. Angular chin. Add enough details and the descriptors point to one specific person. The neurons put the puzzle together and trigger a response in the brain (and the mind). What’s more, you need not see Jennifer Aniston. You need only think about her and the neurons respond. And the connection between these various neurons is strengthened, ready for the next Jennifer thought. The more you think about Jennifer Aniston, the more you think about Jennifer Aniston.

From here, it’s a reasonable jump to the question of memory. As Seung describes the process, it’s a matter of strong neural connections becoming even stronger through additional associations (Jennifer and Brad Pitt, for example), repetition (in all of those tabloids?), and ordering (memory is aided by placing, for example, the letters of the alphabet in order). No big revelations here–that’s how we all thought it worked–but Seung describes the ways in which scientists can now measure the relative power (the “spike”) of the strongest impulses. Much of this comes down to the image resolution finally available to long-suffering scientists who had the theories but not the tools necessary for confirmation or further exploration.

Next stop: learning. Here, Seung focuses on the random impulses first experienced by the neurons, from which, through the repetition of patterns, a bird song emerges. Not quickly, nor easily, but as a result of tens of thousands of attempts (in the case of the male zebra finches he describes in an elaborate example), the song emerges and can then be repeated because the neurons are, in essence, properly aligned. Human learning has its rote components, too, but our need for complexity is greater, and so the human connectome is far more sophisticated, and its connections far more numerous, than that of a zebra finch. In both cases, the concept of a chain of neural responses is the key.

From here, the book becomes more appealing, perhaps, to fans of certain science fiction genres. Seung becomes fascinated with the implications of cryonics, or the freezing of a brain for later use. Here, he covers some of the territory familiar from Ray Kurzweil’s “How to Create a Mind” (recently, a topic of an article here). The topic of fascination: once we understand the brain and its electrical patterns, is it possible to save those patterns of impulses in some digital device for subsequent sharing and/or retrieval? I found myself less taken with this theoretical exploration than with the heart and soul of, well, the brain and mind that Seung explains so well. Still, this is what we’re all wondering: at what point do human brain power and computing brain power converge? And when they do, how much control will we (as opposed to, say, Amazon or Google) exert over the future of what we think, what’s important enough to save, and what we hope to accomplish?

![(Copyright 2006 by Zelphics [Apple Bushel])](https://diginsider.com/wp-content/uploads/2013/03/apple_bushel.png?w=660)

Much of Kurzweil’s theory grows from his advanced understanding of pattern recognition, the ways we construct digital processing systems, and the (often similar) ways that the neocortex seems to work (nobody is certain how the brain works, but we are gaining a lot of understanding as a result of various biological and neurological mapping projects). A common grid structure seems to be shared by the digital and human brains. A tremendous number of pathways turn on or off, at very fast speeds, in order to enable processing, or thought. There is tremendous redundancy, as evidenced by patients who, after brain damage, are able to relearn but who place the new thinking in different (non-damaged) parts of the neocortex.
